I have recently submitted a paper based on some work I have been doing at my job at the Embodied Cognition Lab at Lancaster University. In it, we look at a large set of linguistic distributional models commonly used in cognitive psychology, evaluating each on a benchmark behavioural dataset.

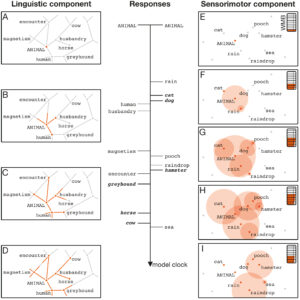

Linguistic distributional models are computer models of knowledge, which learn representations of words and their associations from statistical regularities in huge collections of natural language text, such as databases of TV subtitles. The idea is that, just like people, these algorithms can learn something about the meanings of words by only observing how they are used, rather than through direct experience of their referents. To the degree that they do, they can then be used to model the kind of knowledge which people could gain in the same way. These models can be made to perform various tasks which rely on language, or predict how humans will perform these tasks under experimental conditions, and in this way we can evaluate them as models of human semantic memory.

We show, perhaps unsurprisingly*, that different kinds of models are better or worse at capturing different aspects of human semantic processes.

A preprint of the report is available on Psyarxiv.

*unsurprising to you as you read this, perhaps, but actually this is the largest systematic comparison of models as-yet undertaken, and thereby the first to actually effectively weigh the evidence on this question.